By Andrew Tan

I was at a conference last year when a data architect — Fortune 500, big team, serious budget — pulled me aside and told me a story I've heard too many times now.

They spent 14 months migrating to Kafka. Hired a new team. Stood up new infrastructure. Built a whole new on-call rotation. The works.

Six months after launch, they quietly moved half the pipelines back to batch.

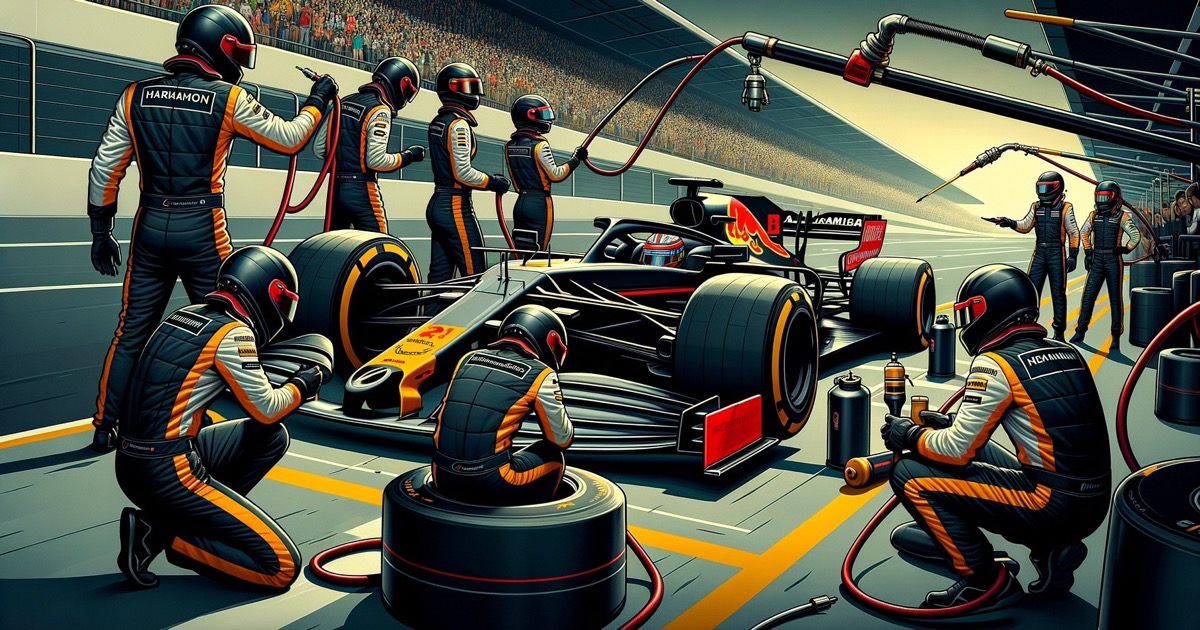

Kafka didn't break. It worked fine. The problem was dumber than that: nobody used the real-time data. The dashboards still got checked at 9 AM. Reports still ran weekly. The ML models still retrained overnight. They'd built a Formula 1 car to drive to the grocery store.

The Conference Talk That Launched a Thousand Migrations

Here's how it usually starts. Someone on the team watches a conference talk — probably at 2x speed on YouTube — where a FAANG engineer describes their real-time pipeline. Billions of events per second. Sub-millisecond latency. Dashboards updating like the Matrix.

Your teammate comes back to the office inspired. "We should do this." The team nods. The CTO loves the word "real-time" in the quarterly roadmap. A Jira epic is born.

What nobody asks: does anyone in this building actually need data faster than they're getting it today?

Not "would it be nice." Not "it sounds more modern." Does a specific person, making a specific decision, get measurably worse outcomes because the data is an hour old instead of a second old?

Nine times out of ten, the honest answer is no. And the one time it's yes, it's usually one pipeline — not the whole platform.

The Grocery List

Before you migrate anything, make a list. I'm serious — open a spreadsheet. For each pipeline, answer three questions:

Who consumes this data? A name. A team. A system. If you can't name the consumer, the pipeline might not need to exist at all, let alone in real time.

What do they do with it, and when? If the answer is "they check a dashboard every morning" or "it feeds a report on Fridays," streaming won't change the outcome. You're just making the plumbing more expensive for the same water.

What breaks if this data is 1 hour late? 1 day late? This is the only question that actually separates batch from streaming. If the answer to both is "nothing, really," you've got a batch workload wearing a streaming costume.
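The three questions map directly onto spreadsheet columns. Below is a minimal Python sketch of the triage, assuming you've already filled in one row per pipeline. The `Pipeline` fields, the `triage` function, and the example pipeline names are hypothetical. The verdicts simply mirror the 1-hour and 1-day questions.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional


@dataclass
class Pipeline:
    """One row of the triage spreadsheet (hypothetical field names)."""
    name: str
    consumer: Optional[str]   # Q1: who consumes this data? None if nobody can be named
    usage: str                # Q2: what they do with it, and when
    breaks_if_1h_late: bool   # Q3a: does anything break if the data is 1 hour late?
    breaks_if_1d_late: bool   # Q3b: does anything break if the data is 1 day late?


def triage(p: Pipeline) -> str:
    """Map the three answers to a rough verdict: a starting point, not a decision."""
    if not p.consumer:
        return "no named consumer: question whether it should exist"
    if p.breaks_if_1h_late:
        return "streaming candidate"
    if p.breaks_if_1d_late:
        return "batch: schedule it hourly or daily"
    return "batch: daily or slower is fine"


pipelines = [  # hypothetical examples
    Pipeline("card_auth_scores", "fraud engine", "approve/decline each transaction", True, True),
    Pipeline("sales_dashboard", "sales ops", "checked every morning at 9 AM", False, True),
    Pipeline("weekly_finance_report", "finance team", "feeds a report on Fridays", False, False),
    Pipeline("legacy_clickstream_export", None, "unknown", False, False),
]

verdicts = {p.name: triage(p) for p in pipelines}
for name, verdict in verdicts.items():
    print(f"{name}: {verdict}")
print(Counter(verdicts.values()))
```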

In my experience, most teams discover that roughly 80% of their pipelines are perfectly fine as batch. The remaining 20% is where things get interesting.

Where Speed Actually Matters

Some data genuinely spoils. Like milk, not wine.

Fraud detection is the obvious one. A credit card transaction flagged five minutes after the fact isn't fraud detection — it's fraud notification. The money's already gone. If your fraud pipeline runs in batch, you're writing apology letters instead of blocking doors.

Operational alerts — if your IoT sensor tells you a turbine is overheating, that information has a shelf life measured in seconds. An hourly batch job here isn't just slow. It's negligent.

Pricing and inventory in competitive markets. If your competitor updates prices every 30 seconds and you update every 6 hours, you're not competing. You're spectating.

Multi-consumer event streams where the economics compound. One Kafka topic feeding three downstream systems can be cheaper than three separate batch jobs pulling from the same database. Streaming earns its keep here not through speed but through architectural elegance.
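The economics hinge on one property: a log-based topic is written once and read independently by every consumer, each tracking its own position. The toy in-memory class below illustrates that idea in Python. It is not Kafka's client API; `Topic`, `publish`, and `poll` are hypothetical names. In Kafka itself, each consumer group keeps its own committed offsets, which is what lets several downstream systems read the same topic without each re-querying the source.

```python
from dataclasses import dataclass, field
from typing import Any, Dict, List


@dataclass
class Topic:
    """Toy append-only log: written once, read by many (not Kafka's API)."""
    events: List[Any] = field(default_factory=list)
    offsets: Dict[str, int] = field(default_factory=dict)  # consumer name -> next offset to read

    def publish(self, event: Any) -> None:
        # The producer appends once, no matter how many consumers exist.
        self.events.append(event)

    def poll(self, consumer: str, max_records: int = 100) -> List[Any]:
        # Each consumer advances only its own offset, so readers don't interfere.
        start = self.offsets.get(consumer, 0)
        batch = self.events[start:start + max_records]
        self.offsets[consumer] = start + len(batch)
        return batch


orders = Topic()
for order_id in (101, 102, 103):
    orders.publish({"order_id": order_id})

# Three downstream systems read the same events from one source.
for system in ("fraud", "inventory", "analytics"):
    print(system, [e["order_id"] for e in orders.poll(system)])
```

Contrast that with three batch jobs, each issuing its own query against the same database on its own schedule.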

The pattern: in every case, there's a specific, measurable cost to delay. Not a vague feeling. A number.

The Costs Nobody Puts in the Jira Epic

Streaming infrastructure has a pricing model that looks great on the vendor slide and terrible on the Q3 actuals.

It runs 24/7. Your batch job runs for 4 hours and sleeps. Your streaming job runs all day, all night, weekends, holidays. Even if the total data volume is identical, the compute bill isn't. A team I know went from a $2,000/month batch setup to $11,000/month streaming — processing the same data, delivering it to the same place, consumed at the same cadence.
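The always-on effect is easy to put in numbers. A minimal sketch, assuming both jobs run on identically priced compute and that cost scales linearly with hours running. Real bills also include storage, networking, and managed-service fees, and autoscaling can change the picture. The hourly rate is a hypothetical placeholder.

```python
HOURLY_RATE_USD = 3.50  # hypothetical price per compute-hour, same for both jobs
DAYS_PER_MONTH = 30


def monthly_compute_hours(hours_per_day: float, days: int = DAYS_PER_MONTH) -> float:
    """Hours the job holds compute in a month."""
    return hours_per_day * days


batch_hours = monthly_compute_hours(4)    # nightly job: 4 hours, then it sleeps
stream_hours = monthly_compute_hours(24)  # streaming job: never sleeps

print(f"batch:  {batch_hours:.0f} h  ${batch_hours * HOURLY_RATE_USD:,.0f}")    # batch:  120 h  $420
print(f"stream: {stream_hours:.0f} h  ${stream_hours * HOURLY_RATE_USD:,.0f}")  # stream: 720 h  $2,520
print(f"ratio:  {stream_hours / batch_hours:.1f}x")                             # ratio:  6.0x
```

Same data volume, same instance size, six times the compute-hours.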

Debugging goes from archaeology to quantum physics. When a batch job fails, you get a stack trace, a bad record, and a clear rerun path. When a streaming job produces wrong output, it might not "fail" at all. It just silently feeds garbage downstream until someone notices the revenue dashboard looks weird three days later.
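One common mitigation is to validate every record in flight and divert failures to a dead-letter store instead of passing them downstream. Bad data then becomes a countable, alertable signal rather than a weird dashboard three days later. A minimal Python sketch; the record fields, the `check_payment` rules, and the 1% alert threshold are all hypothetical.

```python
from typing import Any, Callable, Dict, List, Optional, Tuple

Record = Dict[str, Any]
Validator = Callable[[Record], Optional[str]]  # returns an error reason, or None if valid


def check_payment(r: Record) -> Optional[str]:
    """Hypothetical rules for a payment event."""
    amount = r.get("amount_cents")
    if not isinstance(amount, int) or amount < 0:
        return "amount_cents must be a non-negative integer"
    currency = r.get("currency")
    if not isinstance(currency, str) or len(currency) != 3:
        return "currency must be a 3-letter code"
    return None


def route(records: List[Record], validate: Validator) -> Tuple[List[Record], List[Record]]:
    """Split records into (good, dead_letters); dead letters keep the reason for triage."""
    good: List[Record] = []
    dead_letters: List[Record] = []
    for r in records:
        reason = validate(r)
        if reason is None:
            good.append(r)
        else:
            dead_letters.append({"record": r, "reason": reason})
    return good, dead_letters


incoming = [
    {"amount_cents": 1250, "currency": "EUR"},
    {"amount_cents": -5, "currency": "EUR"},
    {"amount_cents": 900, "currency": "euro"},
]
good, dead = route(incoming, check_payment)

ALERT_THRESHOLD = 0.01  # hypothetical: alert if more than 1% of records fail validation
if len(dead) / len(incoming) > ALERT_THRESHOLD:
    print(f"ALERT: {len(dead)}/{len(incoming)} records dead-lettered")
```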

Schema changes become a diplomatic negotiation. In batch, you version your code, test on historical data, and push. In streaming, changing a field means coordinating every producer and consumer on a live system, in the right order. Get the ordering wrong and you're debugging data corruption at 2 AM.
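A common way to take the sting out of this is to make changes additive and tolerant: new fields get defaults, consumers ignore fields they don't recognize, and the updated consumers roll out before producers start emitting the new field. Schema registries (Confluent Schema Registry, for example) can enforce compatibility rules like these for Avro or Protobuf schemas. The plain-Python sketch below only illustrates the idea; the `Payment` record and its `currency` field are hypothetical.

```python
from dataclasses import dataclass
from typing import Any, Mapping

DEFAULT_CURRENCY = "USD"  # hypothetical default for records written before v2


@dataclass(frozen=True)
class Payment:
    payment_id: str
    amount_cents: int
    currency: str = DEFAULT_CURRENCY  # added in v2; the default keeps v1 records readable


def decode(raw: Mapping[str, Any]) -> Payment:
    """Tolerant reader: accepts v1 and v2 records and ignores unknown fields."""
    return Payment(
        payment_id=str(raw["payment_id"]),
        amount_cents=int(raw["amount_cents"]),
        currency=str(raw.get("currency", DEFAULT_CURRENCY)),
    )


v1 = {"payment_id": "p-1", "amount_cents": 500}
v2 = {"payment_id": "p-2", "amount_cents": 700, "currency": "EUR"}
# A later producer adds a field this consumer doesn't know about yet.
v3 = {"payment_id": "p-3", "amount_cents": 900, "currency": "GBP", "channel": "mobile"}

for raw in (v1, v2, v3):
    print(decode(raw))
```

Deploying this reader first means producers can start sending `currency` whenever they're ready without breaking anyone. Reversing the order is exactly the 2 AM scenario.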

The on-call tax is real. Consumer lag, partition skew, broker failovers — these aren't rare edge cases, they're Tuesday. If your team is already stretched thin, streaming doesn't solve the problem. It multiplies it.
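Consumer lag, the first of those, is simple arithmetic once you have the numbers: for each partition, the log-end offset minus the consumer group's committed offset. Kafka exposes both (the `kafka-consumer-groups.sh --describe` tool prints them per partition, for instance). The sketch below assumes you've already fetched them into dictionaries, and the offsets shown are made up.

```python
from typing import Dict, Tuple


def partition_lag(end_offsets: Dict[int, int], committed: Dict[int, int]) -> Dict[int, int]:
    """Lag per partition = log-end offset - committed offset.

    Simplifying assumption: a partition with no committed offset is counted from 0.
    In practice the starting point depends on the consumer's auto.offset.reset setting.
    """
    return {p: end - committed.get(p, 0) for p, end in end_offsets.items()}


def summarize(lag: Dict[int, int]) -> Tuple[int, int]:
    """Return (total lag, partition with the largest lag)."""
    worst = max(lag, key=lag.get)
    return sum(lag.values()), worst


end_offsets = {0: 10_500, 1: 52_000, 2: 10_200}  # made-up log-end offsets
committed = {0: 10_480, 1: 31_000, 2: 10_200}    # made-up committed offsets

lag = partition_lag(end_offsets, committed)
total, worst = summarize(lag)
print(lag, total, worst)
```

Lag piling up on one partition while the others sit near zero often points to partition skew: a hot key routing most of the traffic to a single partition.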

A Framework That Actually Helps

Here's what I'd recommend. It takes about two hours with the right people in the room.

Step 1: Name the decisions. For each pipeline, identify the specific business decision it supports. Not "analytics" — the actual decision. "Approve or decline this transaction." "Reorder this SKU." "Alert the maintenance crew." If you can't name it, batch is fine.

Step 2: Time the decisions. How often does that decision get made? Every second? Every hour? Every Monday? Match the pipeline cadence to the decision cadence. Sub-second delivery for a daily decision is waste.

Step 3: Price the delay. What does a one-hour delay cost, in dollars? This is the hardest and most important question. If the answer is "we don't know" or "probably nothing," you don't have a streaming use case. You have a streaming wish.

Step 4: Start with one. Pick the pipeline with the clearest delay cost. Migrate it. Run it alongside the batch version for a full cycle. Compare. Fix what breaks. Then decide whether to expand.
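If the grocery-list spreadsheet grows a few more columns, the four steps reduce to a handful of comparisons. A minimal sketch under stated assumptions: `Candidate`, `recommend`, and `pick_pilot` are hypothetical names, the existing batch job is assumed to run hourly, and every dollar figure is an estimate your team supplies, not something code can discover.

```python
from dataclasses import dataclass
from typing import List, Optional

BATCH_INTERVAL_HOURS = 1.0  # assumption: the existing batch job runs hourly


@dataclass
class Candidate:
    pipeline: str
    decision: Optional[str]            # Step 1: the business decision it supports
    decision_interval_hours: float     # Step 2: how often that decision is made
    monthly_cost_of_1h_delay: float    # Step 3: estimated $ lost per month to hour-old data
    monthly_streaming_premium: float   # extra $ per month to run it as streaming


def recommend(c: Candidate) -> str:
    if not c.decision:
        return "batch"  # Step 1: no nameable decision
    if c.decision_interval_hours >= BATCH_INTERVAL_HOURS:
        return "batch"  # Step 2: batch already keeps pace with the decision
    if c.monthly_cost_of_1h_delay <= c.monthly_streaming_premium:
        return "batch"  # Step 3: the delay costs less than streaming would
    return "streaming"


def pick_pilot(candidates: List[Candidate]) -> Optional[Candidate]:
    """Step 4: start with one, the streaming case with the largest net payoff."""
    streaming = [c for c in candidates if recommend(c) == "streaming"]
    if not streaming:
        return None
    return max(streaming, key=lambda c: c.monthly_cost_of_1h_delay - c.monthly_streaming_premium)


candidates = [  # all figures hypothetical
    Candidate("card_auth", "Approve or decline this transaction", 1 / 3600, 50_000, 9_000),
    Candidate("turbine_telemetry", "Alert the maintenance crew", 1 / 60, 20_000, 6_000),
    Candidate("sku_reorder", "Reorder this SKU", 24, 500, 4_000),
    Candidate("exec_dashboard", None, 24, 0, 3_000),
]

for c in candidates:
    print(c.pipeline, recommend(c))
pilot = pick_pilot(candidates)
print("pilot:", pilot.pipeline if pilot else None)
```

Picking by net payoff is one reasonable reading of "the clearest delay cost"; a team could just as well pick the case whose estimate it trusts most.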

Teams that do this well usually end up with 2–3 streaming pipelines and 15 batch ones. Teams that skip this process end up with 18 streaming pipelines, a burned-out ops team, and a quiet migration back to batch six months later.

It's Not Either/Or

Here's the thing most streaming vendors won't tell you: the best data architectures are boring. They're not all-streaming or all-batch. They're a mix, chosen pipeline by pipeline, based on what the data actually needs to do.

Batch for reporting. Batch for ML training. Batch for anything where "daily" is fast enough and simplicity saves you $8,000 a month in ops overhead.

Streaming for fraud. Streaming for operational alerts. Streaming for the few pipelines where delay has a dollar sign attached.

This is exactly why we built layline.io the way we did. It handles both batch and streaming in the same platform — same workflows, same tooling, same team. You don't have to pick one world and abandon the other. You start with what you have, add real-time where it earns its keep, and keep batch where it makes sense. No rip-and-replace. No either/or.

The architecture diagram won't headline any conference talks. But the team goes home at 5 PM and the on-call rotation actually sleeps through the night.

That's the kind of boring that scales.

If you're evaluating which pipelines belong in real-time and which are perfectly fine as batch, layline.io lets you run both in a single platform — so you can validate the business case before committing infrastructure. Talk to us about your specific architecture.

Andrew Tan is a serial entrepreneur and founder of layline.io, building enterprise data processing infrastructure that handles both batch and real-time workloads at scale.